Yesterday we tested Imagga’s deep learning algorithms against six others. The results were quite good, and we believe our tech recognizes everyday objects with impressive accuracy.

Looks like there’s another interesting test of various image recognition technologies, this time performed by Jack Clark for Bloomberg Business with several challenging images.

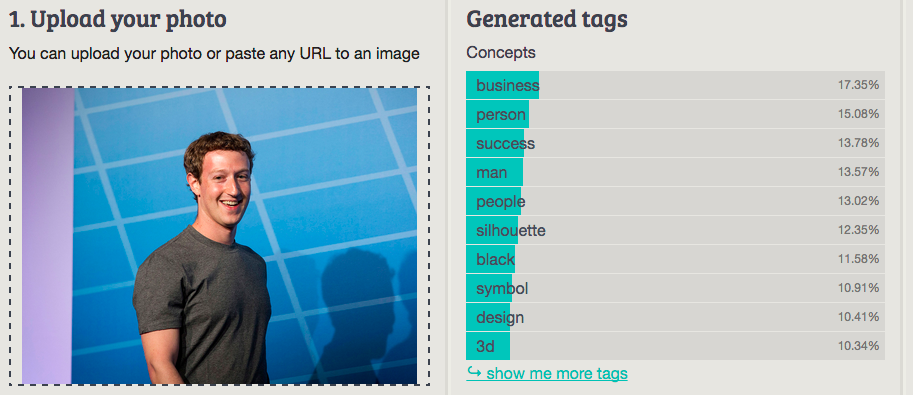

It’s quite fascinating to run the same images the author originally used through the Imagga image tagging demo to find out how we perform as well. Imagga tech definitely thinks Mark Zuckerberg is more than a cardigan, but still tags him with a few trivial words (see the results below). Maybe the subtitle of the article would have been different if Imagga’s image tagging had been considered, but the ‘Best answer’ section would have been a bit boring 😉

Let’s have some fun now (feel free to use the Imagga auto-tagging demo to test with your own images):

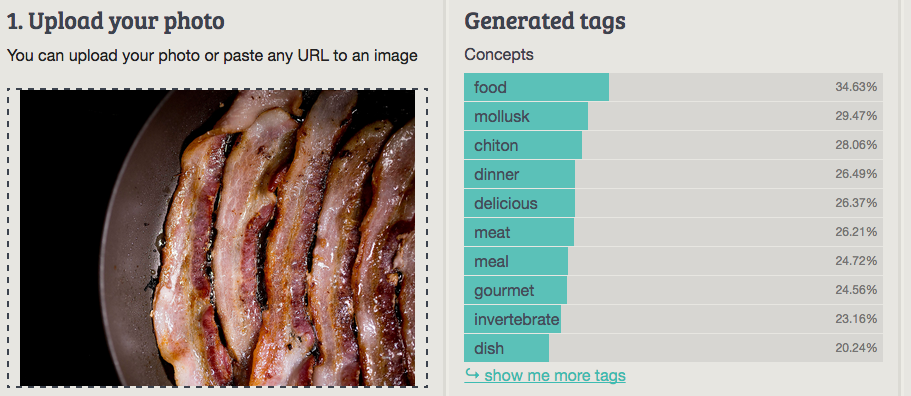

Imagga: food

Best answer: Fat (Clarifai)

Worst answer: Slug/Churros (MetaMind, demo version)

Imagga: business

Best answer: Cloth/Zuckerberg (Orbeus)

Worst answer: Cardigan (MetaMind, demo version)

Imagga: cat

Best answer: Cat—everyone got this. After all, the Internet is made of cats.

Imagga: mountain

Best answer: Mountain (Orbeus)

Worst answer: Vehicle/Scene (IBM Watson Visual Recognition, beta version)

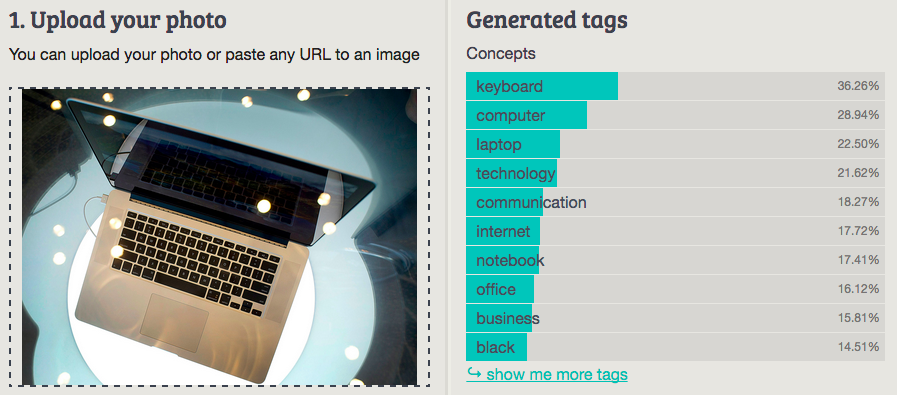

Imagga: keyboard

Best answer: Technology (Clarifai)

Worst answer: Photo/Object (IBM Watson Visual Recognition, beta version)

Have an amazing idea but feel sceptical about image recognition? Give Imagga’s super-easy-to-use API a try and you’ll change your mind 🙂