Metals Hackathon in Vienna and Industry 4.0 Opportunities for Image Recognition

If you read the technology news of the day, it looks like everybody is talking very seriously about AI. The awareness this creates is excellent, but it’s even better when companies from more traditional industries take up the task of experimenting and finding ways to apply AI in their daily operations. Companies in the medical, health, and metallurgy industries are finding ways to use AI to regain a competitive edge.

We participated in the Digital Metals AI Hackathon, organized in Vienna by Pioneers.io, and accepted the challenge to build an exciting new solution for AI-enabled measurement of metal parts. The goal of the hackathon was to generate the best ideas and create new solutions in a rapid prototyping format.

Voestalpine and Primetals were the hosts of the event. By bringing together industry leaders and top technology talent from the startup world, the two industrial companies hoped to solve specific real-life challenges and identify opportunities for future collaboration.

There were four predefined challenges that the participating startups needed to address. We took on one of Voestalpine’s cases: measuring steel pieces with a mobile app. The Metals Division of Voestalpine, the market leader for tool steel, is focused on producing and processing high-performance materials. Its products include high-speed steel, valve steel, powder materials, nickel-based alloys, titanium, and components produced using additive manufacturing technologies.

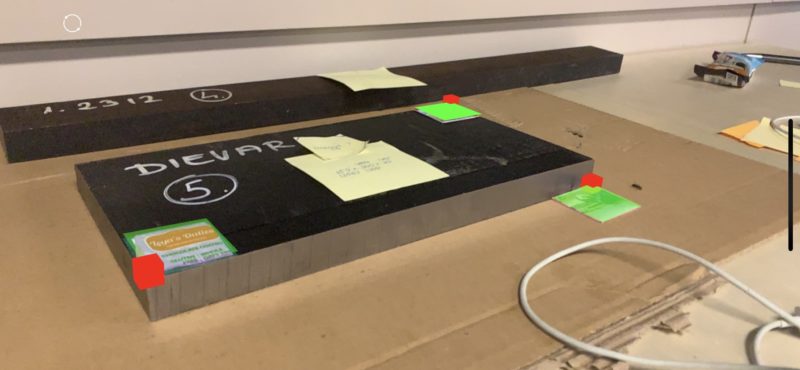

The goal of the challenge was to develop a mobile app that uses the phone camera to measure the dimensions of steel parts. The app has to measure the three dimensions of rectangular Voestalpine steel pieces from a photo or video. Using an app would make the process more efficient and less error-prone.

Currently, the three dimensions are measured with a tape measure and typed in manually on a keyboard. This turns out to be time-consuming, leads to mistakes, and causes frustration. The metal pieces are quite heavy and cannot be moved around for better access or more convenient measurement.

The atmosphere at the event was excellent, with fun ice-breakers, cozy working areas, and mentors from the metal industry companies on hand at all times.

In less than a day, we built an impressive MVP for measuring rectangular steel pieces using Apple’s ARKit, applying our computer vision and computational geometry know-how to recognize markers in 3D space.

As demonstrated in the short video below, once the markers (we used parts of protein bar packaging as improvised markers ;)) are positioned around the steel piece, all the operator needs to do is point the camera at each of them until it’s detected and then move on to the next one. Once all three markers have been seen (sequentially; they don’t need to be in view at the same time, which would be impractical for long pieces), the system suggests the item’s dimensions in millimeters. Of course, the operator can switch to manual mode and adjust the measurement if needed.

https://youtu.be/7Wn_l9aZdkY

We built the whole prototype in less than a day, after realizing on the first evening that an approach requiring all markers to be visible in a single shot would not be practical for long pieces.
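Markers can be seen one after another because an AR session keeps a single world coordinate frame, so a marker position recorded at one moment can be compared with positions recorded later. Below is a minimal Python sketch of that bookkeeping. It assumes the AR layer (ARKit reports positions in metres) hands over each detected marker’s centre as an (x, y, z) tuple. The class name, marker IDs, and readiness check are hypothetical, and the post does not say which marker pairs correspond to which of the three dimensions.

```python
import math
from typing import Dict, Iterable, Tuple

Vec3 = Tuple[float, float, float]


class MarkerMeasurer:
    """Hypothetical helper: collects marker positions detected one at a time.

    Assumes every position is expressed in the same world frame, in metres,
    as an AR session such as ARKit provides for the duration of a session.
    """

    def __init__(self, required: Iterable[str] = ("A", "B", "C")) -> None:
        self.required = tuple(required)
        self.positions: Dict[str, Vec3] = {}

    def record(self, marker_id: str, position_m: Vec3) -> None:
        # A later sighting of the same marker replaces the earlier estimate.
        self.positions[marker_id] = position_m

    def ready(self) -> bool:
        # True once every required marker has been seen at least once,
        # whether or not they were ever in view together.
        return all(m in self.positions for m in self.required)

    def distance_mm(self, a: str, b: str) -> float:
        # Euclidean distance between two recorded marker centres, in millimetres.
        return math.dist(self.positions[a], self.positions[b]) * 1000.0


# Usage: markers are detected one after another as the operator walks along the piece.
m = MarkerMeasurer()
m.record("A", (0.0, 0.0, 0.0))
m.record("B", (1.2, 0.0, 0.0))
print(m.ready())  # False: marker C has not been seen yet
m.record("C", (1.2, 0.35, 0.0))
print(m.ready(), round(m.distance_mm("A", "B")))  # True 1200
```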

Our solution turned out to be promising. Some of the participants and judges couldn’t believe it could work without all three markers being visible at once.

We are exploring more practical applications of image recognition for smart factories. We believe we have just scratched the surface of the automation and augmentation possible with current AI technologies.

Below is a video demonstrating how our visual search technology can be used to recognize different machine parts, something of enormous value when a repair or replacement is needed and the inventory catalog is vast.

https://youtu.be/z95S2Ga2jiU

Press Release: Imagga Launches the First On-Premise Solution

Imagga launches the first ever on-premise solution for visual A.I.

San Jose, CA. May 10, 2017. Imagga, a premier PaaS provider of visual recognition, now offers its image intelligence solution for deployment on private, local servers. Companies whose data cannot be shared in the cloud, for whatever reason, can now fully benefit from Imagga’s award-winning content recognition technology.

With the introduction of its on-premise solution, Imagga breaks a new innovation barrier. Companies can now access best-of-breed deep-learning content recognition technology without giving up the confidentiality of their data. While Imagga’s cloud-based solution already offers an extremely high level of security and privacy, its on-premise solution goes a step further for cases where data simply cannot leave local servers.

“Our on-premise offering is the ultimate data control solution for companies like private cloud, law enforcement, medical, legal, telecom and highly sensitive corporate content. Installed on a local server, Imagga’s visual content recognition can process billions of images, all without leaving the company’s premises,” says CEO and co-founder Georgi Kadrev. “It delivers the highest degree of data security and privacy. No other image A.I. company in the world offers this level of control and security.”

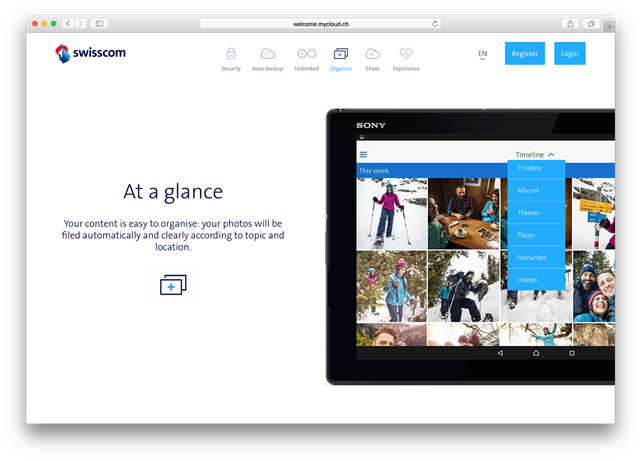

Swisscom, an advanced user of Imagga’s on-premise solution, regularly processes huge volumes of images on its own servers: “Imagga impressed us with the quality of the recognition technology, recognizing both objects and broader scenic categories,” says Andreas Breitenmoser, Project Manager at Swisscom A.G.

Imagga’s on-premise solution can be deployed quickly and seamlessly on any servers with the appropriate technical requirements. The company has been working closely with NVIDIA, the leader in GPU high-performance computing, to optimize all its software to run smoothly and efficiently on NVIDIA GPU servers with Tesla and Pascal architectures.

Highly scalable, the on-premise solution delivers the same level of accuracy as the existing cloud offering, currently deployed by over 9,000 developers worldwide and implemented by companies like Cloudinary, Unsplash, Tavisca, KIA Motors, and Plex TV to process several billion photos and videos in the last year alone.

About Imagga

Imagga (https://www.imagga.com/) is an Image Recognition Platform-as-a-Service providing Image Tagging APIs for developers and businesses to build scalable, image- and video-intensive apps. The company was recognized by IDC as one of the top innovators in its space for 2016. Built for scalability and easy to deploy, it offers auto-tagging, auto-categorization, smart cropping, color analysis, and content monitoring, as well as custom classification.

For more information, please contact:

Georgi Kadrev

georgi@imagga.com

Paul Melcher

paul@imagga.com

+1 917 304 3875

Media Files

Imagga logo - https://goo.gl/hgqBkE

Georgi Kadrev, CEO photo - https://goo.gl/BCgj43

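Introduction / Technology Overview

Imagga provides a cloud API platform that automatically tags photos and videos for developers and businesses. Imagga’s technology lets companies actively analyze, categorize, and manage their large collections of photos and videos.

https://youtu.be/XoZAW3XXgDc

Imagga develops solutions that analyze images in detail and return optimal search results using machine learning and CNN (convolutional neural network) algorithms. With Imagga’s API, companies can categorize their image and video collections and, in turn, monetize their visual content. Keyword search over images also becomes possible without manually annotating them, which improves the user experience.

Technology Highlights

- Automatic image tagging & categorization through deep learning (CNN)

- RESTful API solution, in the cloud or on-premise

- A system that can be trained on your own image sets/categories (custom training)

- A solution that can analyze large volumes of visual content

- Support for more than 50 languages (including Korean)

Contact us to get a quote or to set up a meeting.

What can you do with Imagga’s image tagging technology?

- Tag large volumes of photos fully automatically and with remarkable accuracy

- Organize images and videos into categories: train the algorithm on your own unique dataset and manage that dataset through custom training

- Profile users based on their own image content

- Improve image search by adding keywords to your content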

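Tagging API

Imagga’s Tagging API analyzes the pixels of a photo to detect objects and extract features. Based on the objects and concepts that best represent the photo, it suggests more than 20 keywords. Companies put these keywords to use in many ways. Examples include adding photo search, profiling users, and analyzing trends.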
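To illustrate the photo search use, here is a minimal Python sketch that turns tagging results into a keyword index. It assumes the JSON shape of Imagga’s v2 `/tags` endpoint as we understand it: `result.tags[]` entries, each with a `confidence` percentage and a `tag.en` label. Please check the current API documentation before relying on that shape. The function names and the confidence cut-off are hypothetical, and the HTTP call itself is left out.

```python
import json
from collections import defaultdict
from typing import Dict, List, Set


def keywords_from_response(response_text: str, min_confidence: float = 30.0) -> List[str]:
    """Extract English tag labels at or above a confidence threshold (assumed v2 /tags shape)."""
    data = json.loads(response_text)
    tags = data["result"]["tags"]
    return [t["tag"]["en"] for t in tags if float(t["confidence"]) >= min_confidence]


def build_index(responses: Dict[str, str], min_confidence: float = 30.0) -> Dict[str, Set[str]]:
    """Map each keyword to the set of photo IDs tagged with it (a simple inverted index)."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for photo_id, response_text in responses.items():
        for keyword in keywords_from_response(response_text, min_confidence):
            index[keyword].add(photo_id)
    return dict(index)


# Usage with a hand-written response in the assumed format.
sample = json.dumps({"result": {"tags": [
    {"confidence": 87.2, "tag": {"en": "beach"}},
    {"confidence": 12.5, "tag": {"en": "dog"}},
]}})
index = build_index({"photo-1.jpg": sample})
print(index)  # {'beach': {'photo-1.jpg'}}
```

A search for "beach" then becomes a dictionary lookup, and no one has to annotate the photos by hand.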

If you’d like to try the auto-tagging API, please follow the link below.

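Categorization API

The Categorization API sorts photos into categories, which are broader than keywords. It automatically organizes all of your photos so you can find them more conveniently, for example photos kept in personal cloud storage. Instead of going through every photo one by one, you can look photos up by categories such as "beach" or "party".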
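A minimal Python sketch of organizing photos into "beach" or "party" albums from categorization results follows. It assumes each response uses the shape of Imagga’s v2 categories endpoint as we understand it: `result.categories[]` entries with a `confidence` and a `name.en` label. Verify this against the current API documentation. The helper names are hypothetical, and fetching the responses is left out.

```python
from collections import defaultdict
from typing import Dict, List


def top_category(response: dict) -> str:
    """Return the highest-confidence category name (assumed v2 categories shape)."""
    categories = response["result"]["categories"]
    best = max(categories, key=lambda c: float(c["confidence"]))
    return best["name"]["en"]


def group_into_albums(responses: Dict[str, dict]) -> Dict[str, List[str]]:
    """Group photo IDs by their top category, e.g. 'beach' or 'party'."""
    albums: Dict[str, List[str]] = defaultdict(list)
    for photo_id, response in responses.items():
        albums[top_category(response)].append(photo_id)
    return dict(albums)


def cat(name: str, confidence: float) -> dict:
    # Builds one category entry in the assumed response format (for the example only).
    return {"name": {"en": name}, "confidence": confidence}


responses = {
    "img1.jpg": {"result": {"categories": [cat("beach", 91.0), cat("party", 4.0)]}},
    "img2.jpg": {"result": {"categories": [cat("party", 77.0), cat("beach", 9.0)]}},
    "img3.jpg": {"result": {"categories": [cat("beach", 65.0)]}},
}
print(group_into_albums(responses))
# {'beach': ['img1.jpg', 'img3.jpg'], 'party': ['img2.jpg']}
```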

If you’d like to try the auto-categorization API, please follow the link below.

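Custom Training

With custom training, customers can define keywords or categories to fit their own needs, gaining higher accuracy and keywords that are specific to them.

Custom training is very simple. You provide the categories along with sample data for each category, and we use that data to train the system. The newly created classifier is easily exposed through the API, so it can be plugged into your existing systems and workflows with little effort. This approach is ideal for companies that have been annotating or categorizing photos manually: the content that is already categorized can serve as the training dataset for the remaining uncategorized content.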
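Reusing already-categorized content as training data amounts to a simple data-preparation step. Here is a minimal Python sketch built on our own assumptions. The catalogue is a mapping from item ID to its manual category, or None if the item is still uncategorized. The sketch also adds a hold-out split for checking accuracy; that is common practice, but this page does not describe it. No Imagga API is called, and how the training set is actually submitted is not covered here.

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional, Tuple


def prepare_training_data(
    items: Dict[str, Optional[str]],
    holdout_fraction: float = 0.2,
    seed: int = 0,
) -> Tuple[Dict[str, List[str]], Dict[str, List[str]], List[str]]:
    """Split a catalogue into per-category training/validation sets and unlabeled items.

    `items` maps an item ID to its manually assigned category, or None if the
    item has not been categorized yet (hypothetical input format).
    """
    by_category: Dict[str, List[str]] = defaultdict(list)
    unlabeled: List[str] = []
    for item_id, category in items.items():
        if category is None:
            unlabeled.append(item_id)  # to be categorized by the trained classifier
        else:
            by_category[category].append(item_id)

    rng = random.Random(seed)  # fixed seed for a reproducible split
    train: Dict[str, List[str]] = {}
    validation: Dict[str, List[str]] = {}
    for category, ids in by_category.items():
        ids = sorted(ids)
        rng.shuffle(ids)
        # Hold out a fraction for validation, but keep at least one training example.
        n_val = min(int(len(ids) * holdout_fraction), len(ids) - 1)
        validation[category] = ids[:n_val]
        train[category] = ids[n_val:]
    return train, validation, unlabeled


catalogue = {f"p{i}": ("sofa" if i < 5 else "lamp" if i < 10 else None) for i in range(12)}
train, val, todo = prepare_training_data(catalogue)
print({c: len(v) for c, v in train.items()}, {c: len(v) for c, v in val.items()}, todo)
# {'sofa': 4, 'lamp': 4} {'sofa': 1, 'lamp': 1} ['p10', 'p11']
```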

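To learn more about custom training, please follow the link below.

On-Premise Solution

Our on-premise solution (installed directly on your own servers), the first one announced by an image recognition company, delivers the same reliability and results as the cloud version.

It can be installed as long as your hardware and OS meet the minimum requirements. The number of photos that can be processed and the processing speed depend on the size of your servers. The initial installation takes more money and time, but it is far more cost-effective than developing the technology yourself.

Still not sure how we can help? Take a look at our key case studies.

KIA Motors

KIA Motors ran a showcase campaign for its K5 car that automatically recommended the K5 model best suited to each person’s lifestyle. The campaign offered options for 36 different lifestyles, with recommendations based on engine, color, and other design choices.

Imagga’s technology provided the core of the campaign: the analysis of each person’s social media photos. Working with SEEDPOST, we built a profiling engine tailored to the campaign. Using Imagga’s auto-tagging API, SEEDPOST successfully built an engine that automatically recommends the K5 model matching each user’s tastes.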
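This page does not describe how SEEDPOST’s engine worked. Purely to illustrate profiling from auto-tags, here is a minimal Python sketch. It assumes each photo has already been tagged into (label, confidence) pairs and that each lifestyle is described by a hand-picked keyword set. The keyword sets and the scoring rule are hypothetical and are not the campaign’s actual method.

```python
from collections import Counter
from typing import Dict, Iterable, List, Set, Tuple


def tag_profile(photo_tags: Iterable[List[Tuple[str, float]]]) -> Counter:
    """Sum tag confidences (0-100, scaled to 0-1) over all of a user's photos."""
    profile: Counter = Counter()
    for tags in photo_tags:
        for label, confidence in tags:
            profile[label] += confidence / 100.0
    return profile


def best_lifestyle(profile: Counter, lifestyles: Dict[str, Set[str]]) -> str:
    """Pick the lifestyle whose keyword set collects the most profile weight."""
    # Missing keys in a Counter count as 0, so unseen keywords add nothing.
    return max(lifestyles, key=lambda name: sum(profile[k] for k in lifestyles[name]))


photos = [
    [("mountain", 90.0), ("hiking", 70.0)],
    [("beach", 80.0), ("surfing", 60.0)],
    [("mountain", 50.0), ("tent", 40.0)],
]
lifestyles = {  # hypothetical lifestyle keyword sets
    "outdoor": {"mountain", "hiking", "tent"},
    "seaside": {"beach", "surfing"},
}
print(best_lifestyle(tag_profile(photos), lifestyles))  # outdoor
```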

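If you’d like to know how our technology was applied to user profiling, please contact us.

Swisscom

Imagga’s API was installed as an on-premise solution for Swisscom’s personal cloud storage, where it was used to add photo search and browsing features. Our technology gives real users the ability to search and browse their photos, and it has increased both the number of users and the time they spend using the service.

Want more information about Imagga’s on-premise solution? Contact us.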

Image Detection Bots and Imagga

It looks like 2016 will be the year of bots, and almost all of them claim to use some form of machine learning to do their job even better. Microsoft just released an image detection bot called CaptionBot. As with everything related to image recognition via machine learning, the bot does quite a good job detecting some concepts and quite a bad one with others.

We always find it fun to compare image recognition services and see how Imagga performs on the photos people actually use. This blog post was prompted by a friend who posted this on Facebook:

The post was about a TNW article by Natt Garun on Microsoft CaptionBot and its failure to deliver consistent results. We love Microsoft and even use Azure and Microsoft’s translation tools to improve the language support for our tags, but it was too tempting not to run the test and share the results.

We ran the same photos from the article through the Imagga API demo, and here’s what our image recognition thinks of them. It’s still not perfect! Have fun exploring the results.

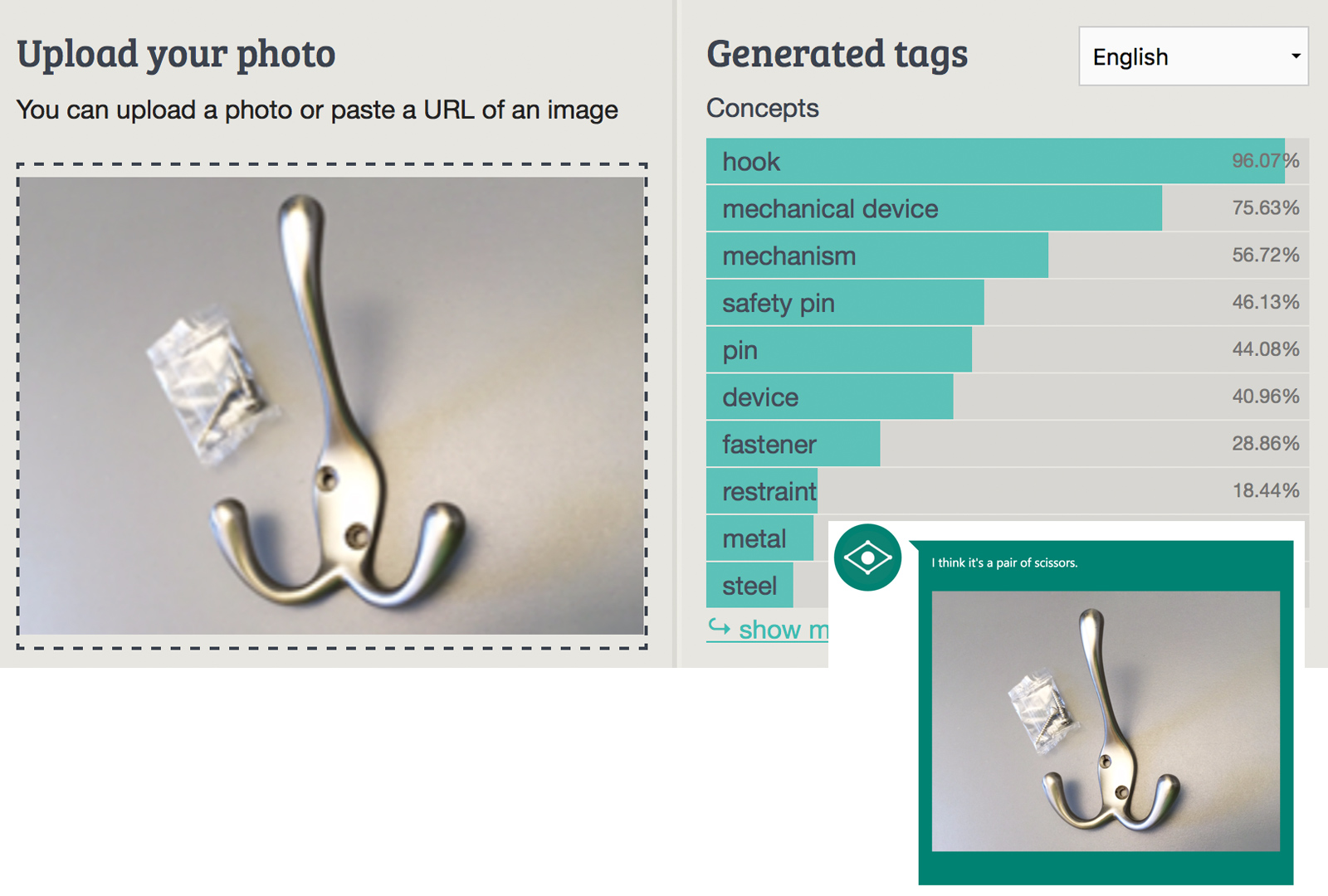

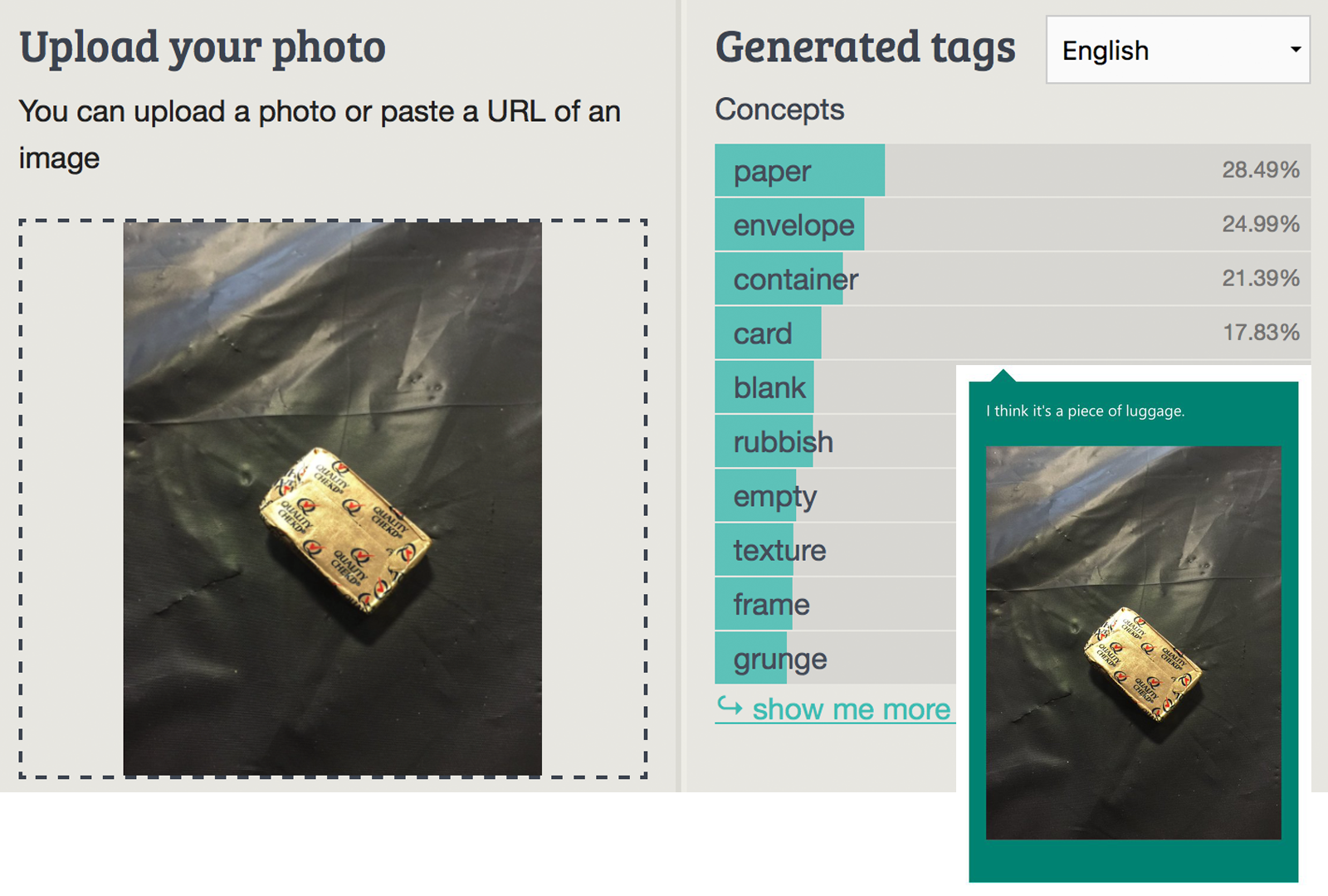

The first photo is of a home metal hook:

We've nailed that one, seriously!

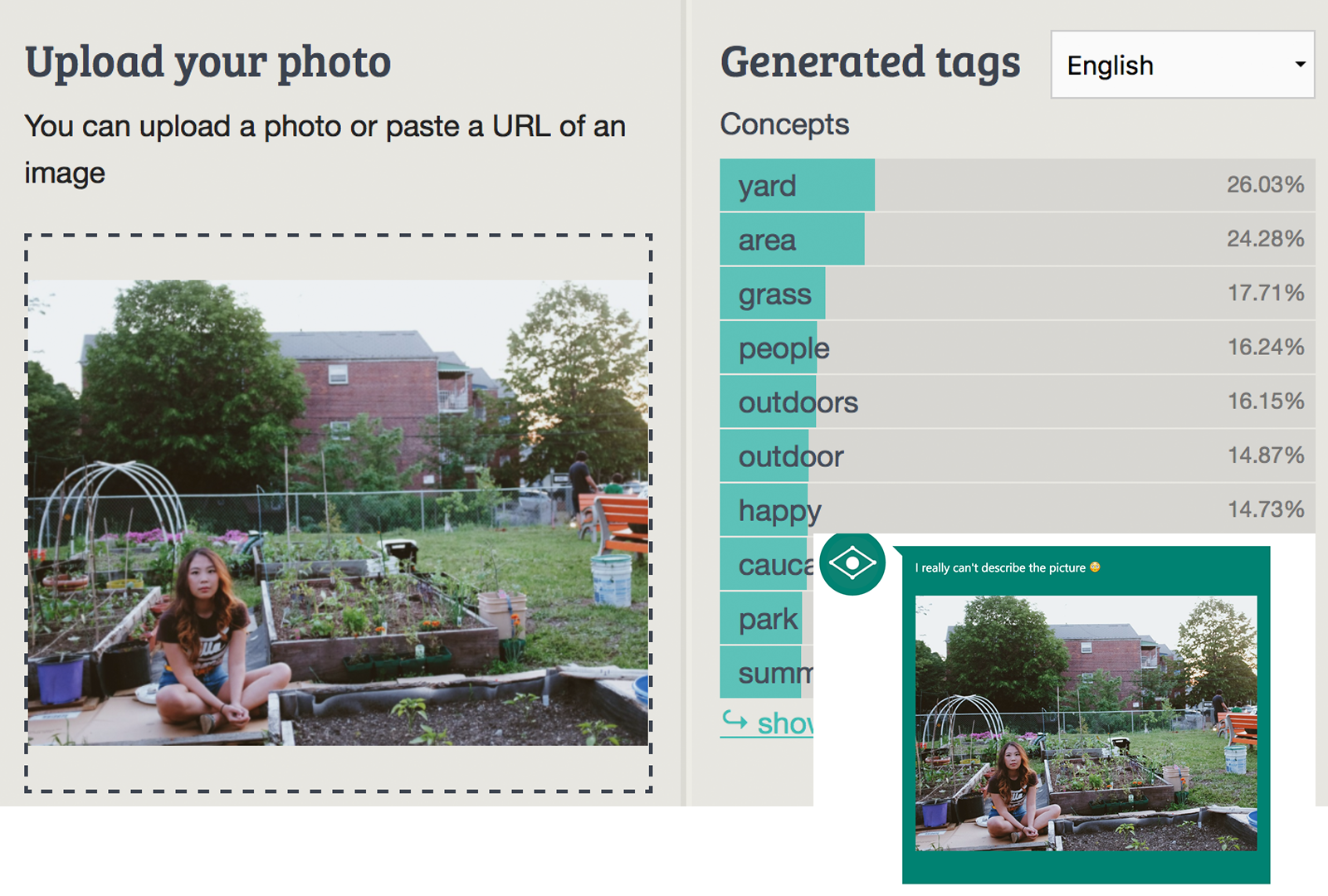

An obvious description of the photo would be something like "Beautiful Asian girl sitting in a veggie garden." We came quite close with tags like yard, grass, outdoors, and people. We didn’t get the race right, but that’s something we are currently working on, with lots of improvements coming in the next couple of months.

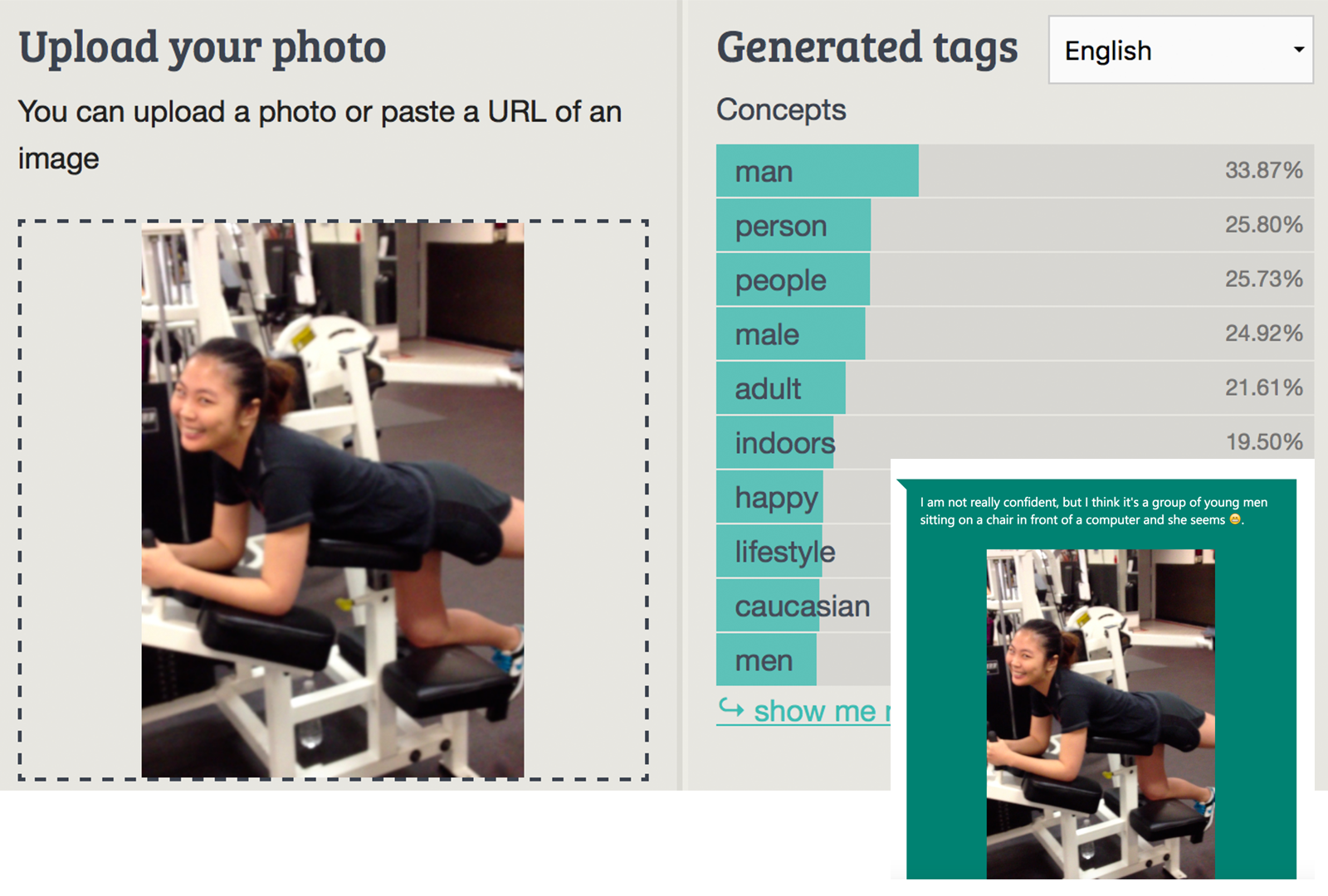

There’s something about that photo that makes it difficult for the technology to figure out. We didn’t do an exceptionally good job here. Well, at least we tagged it with happy, which is obvious from the shining face of that Asian woman.

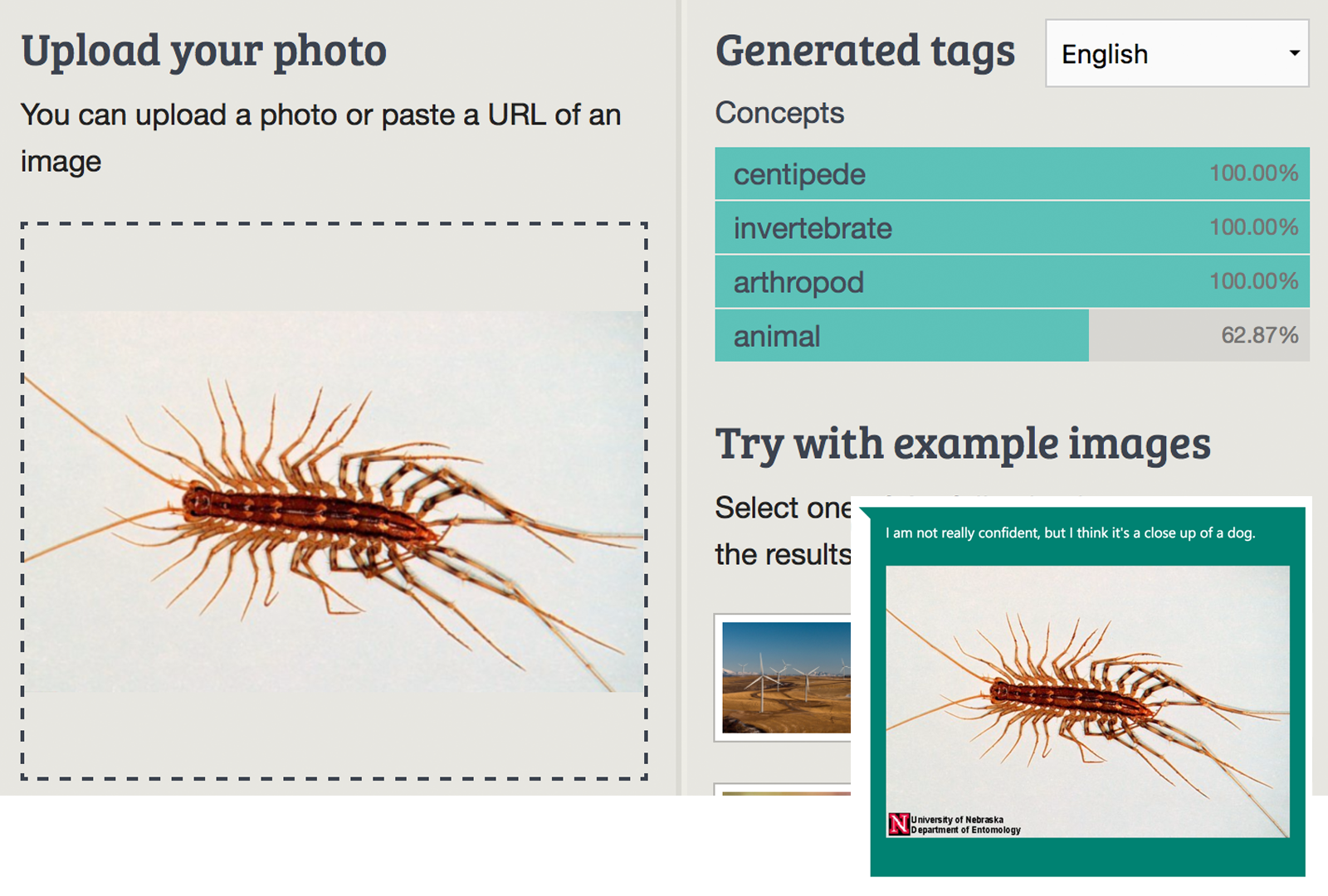

It’s obvious we do well with living creatures. Good thing we don’t need to figure out whether they are male or female and how happy they are.

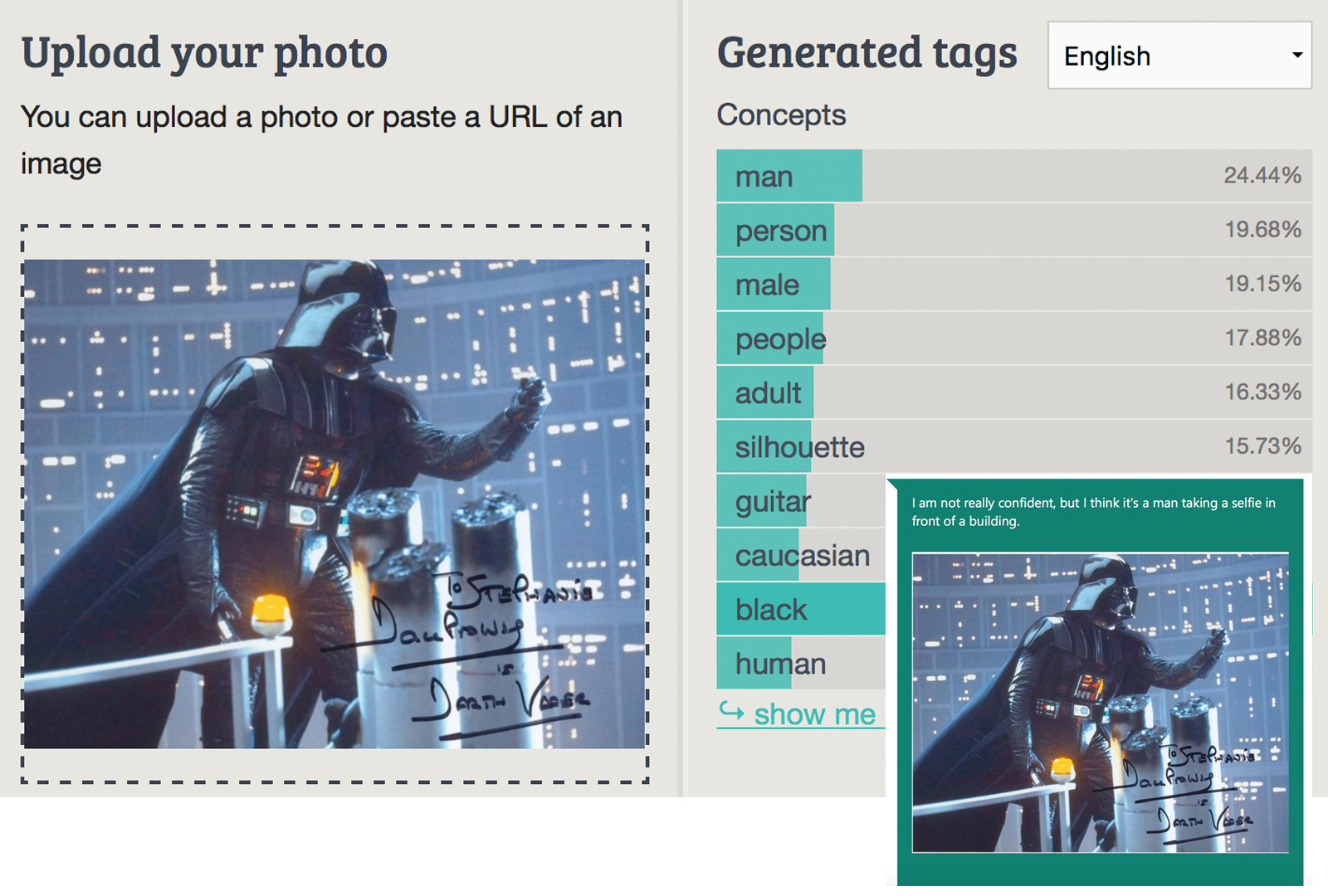

This one is quite difficult, as it carries a lot of context that the machine needs to know about. We haven’t trained for movie characters, but the tags are generally good. We can argue about the guitar: if you have a wild imagination, you can definitely picture Darth Vader with a guitar. The tag human doesn’t really apply in this case, and we are happy with its low confidence level ;-)

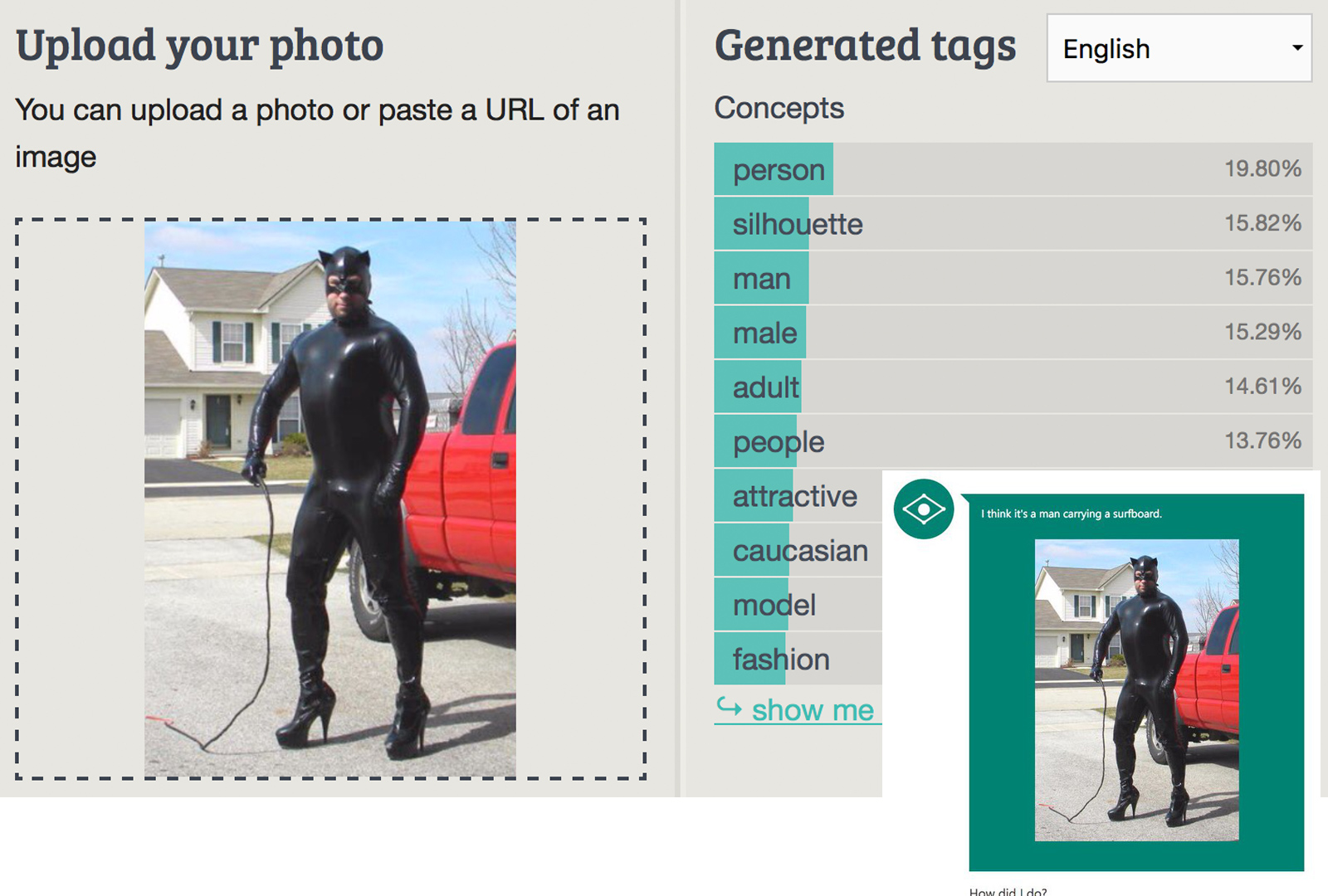

Ever wondered where people come up with ideas for testing? This one is a classic. Definitely no surfboard, and yes, it’s an attractive, fashionable male model ;-)

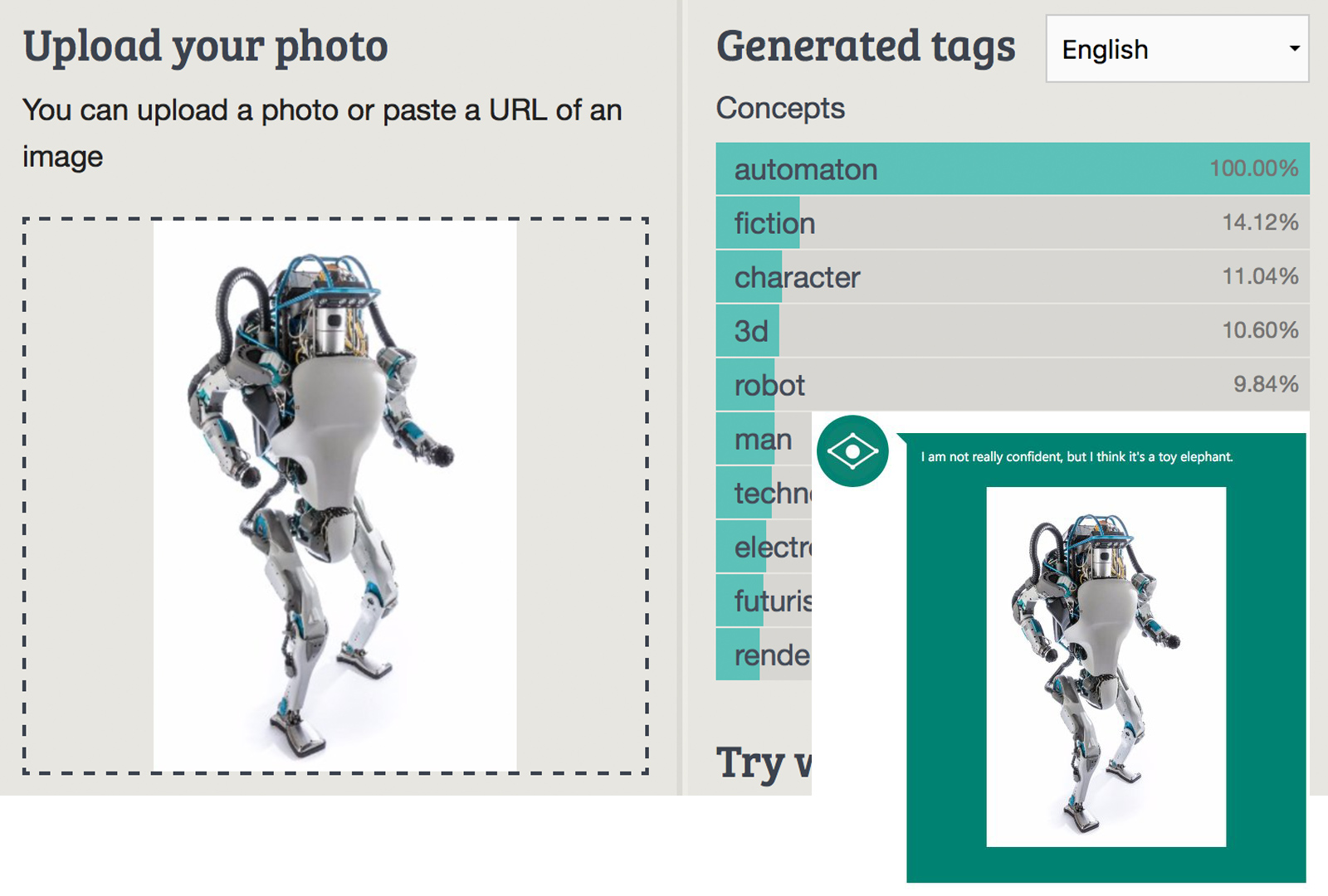

Toy elephants may look like that in the foreseeable future, but to us this looks like a robot man. Automation is what robots are destined for, right?

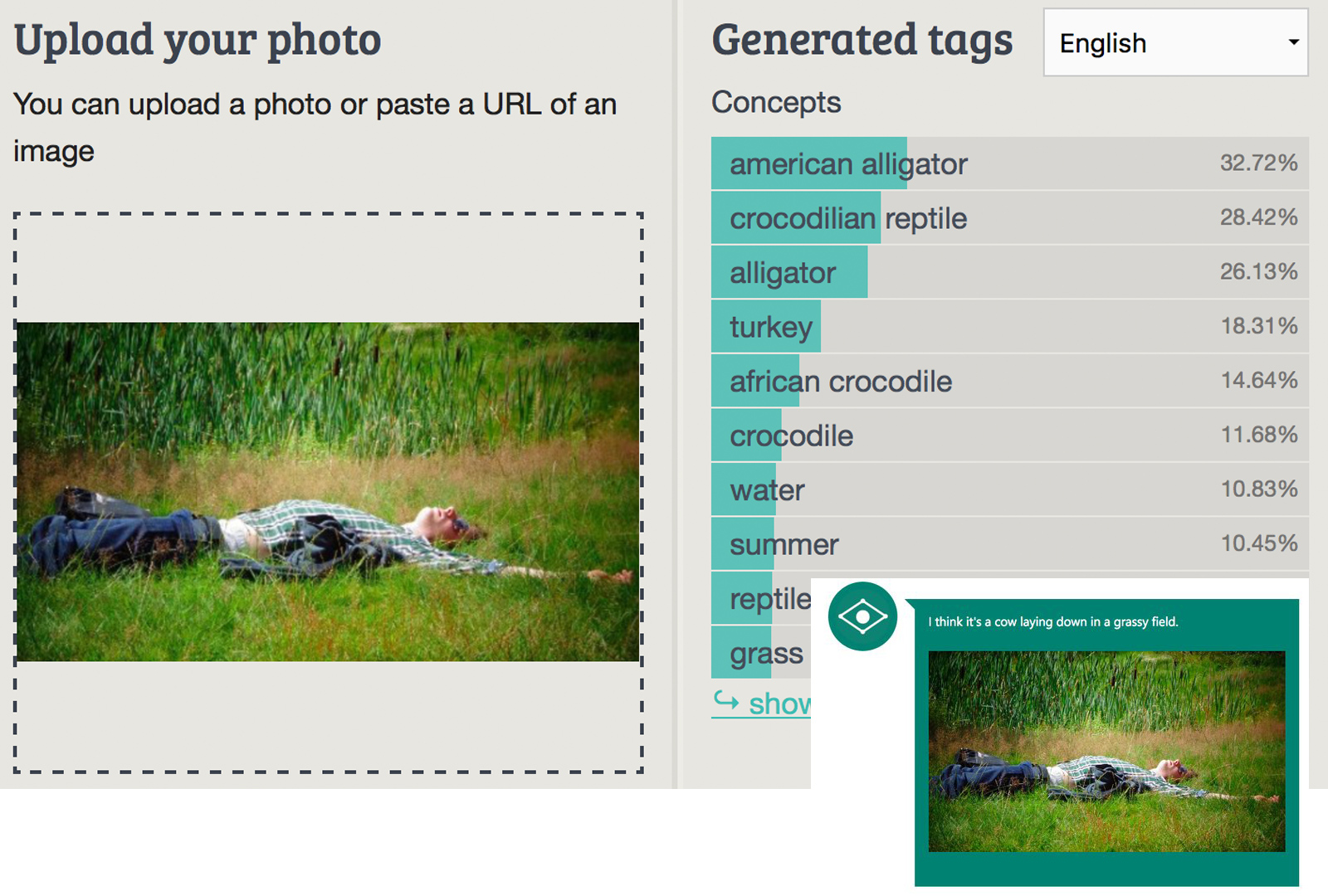

We couldn’t agree more that the person lying in the grass looks like an alligator. Tags like summer and grass bring a bit of balance to the results. If not accurate, we are at least a bit closer than "a cow lying down in a grassy field."

This one is quite tough. What is obvious to a person is not so obvious to the machine, which lacks the context of the two major objects in this photo.

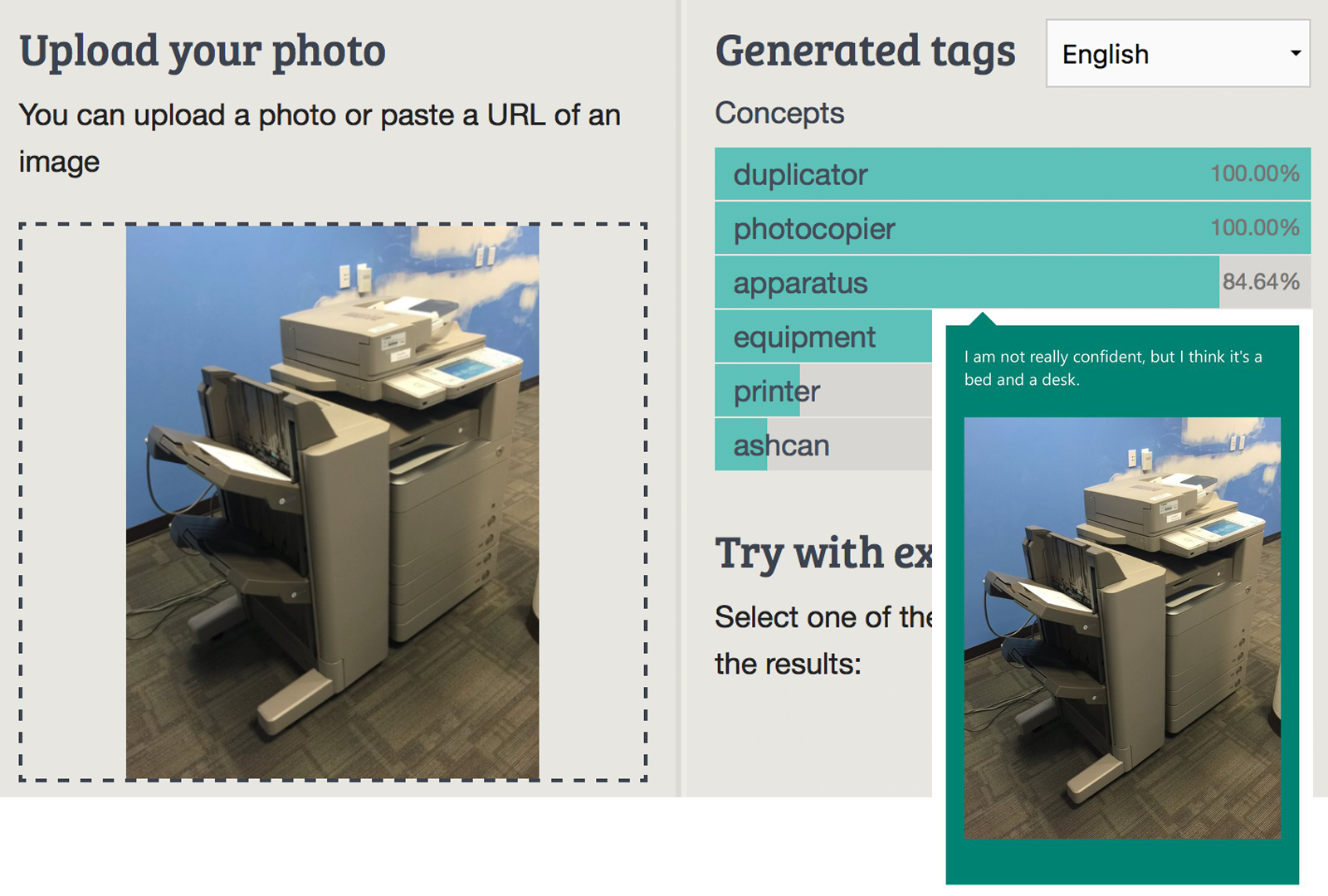

It’s a great idea to put a bed next to every desk in the office. Maybe one next to the copy machine isn’t such a good idea, though: too much noise and traffic around it.

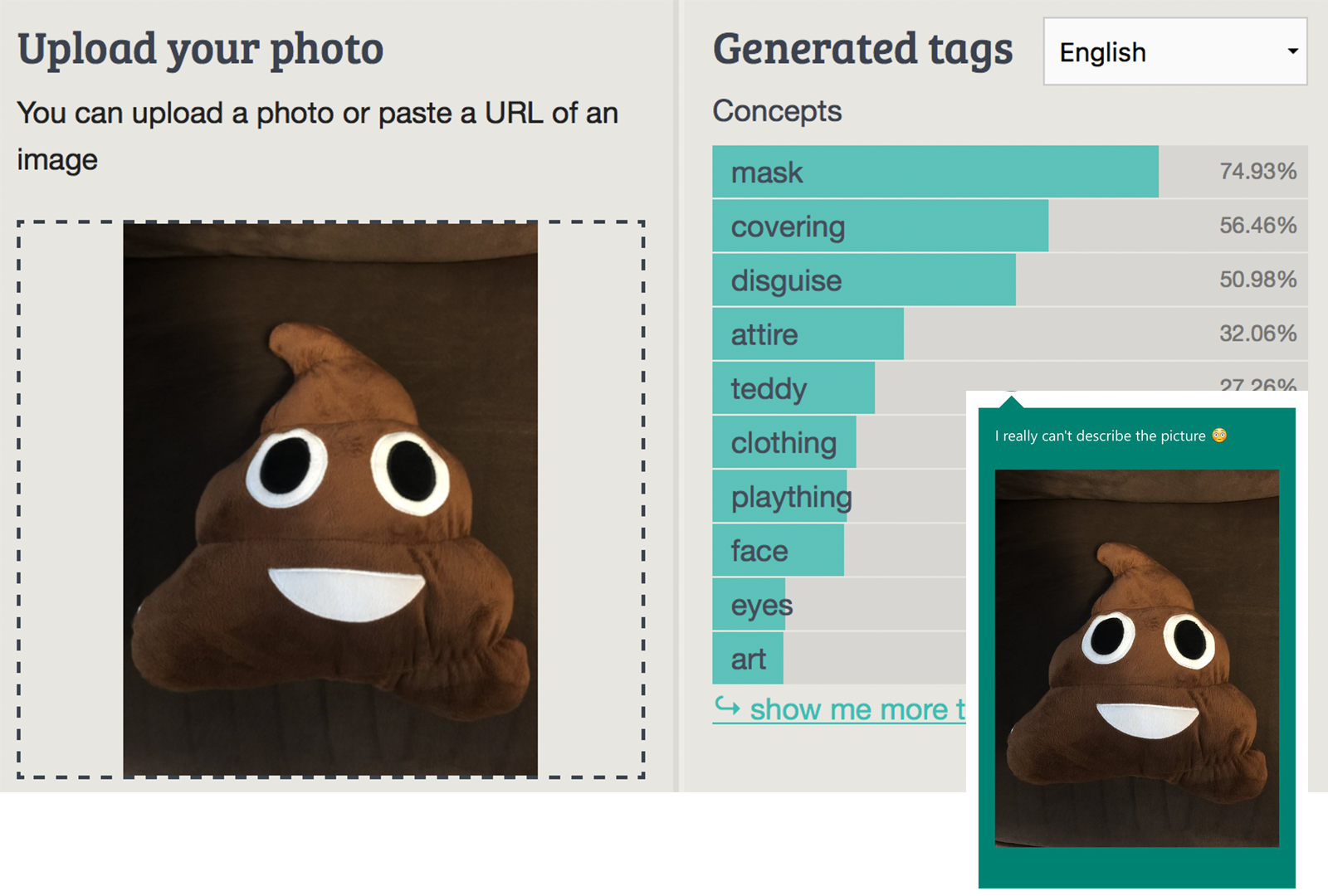

We tried to describe this picture, and the results are not too bad ;-)

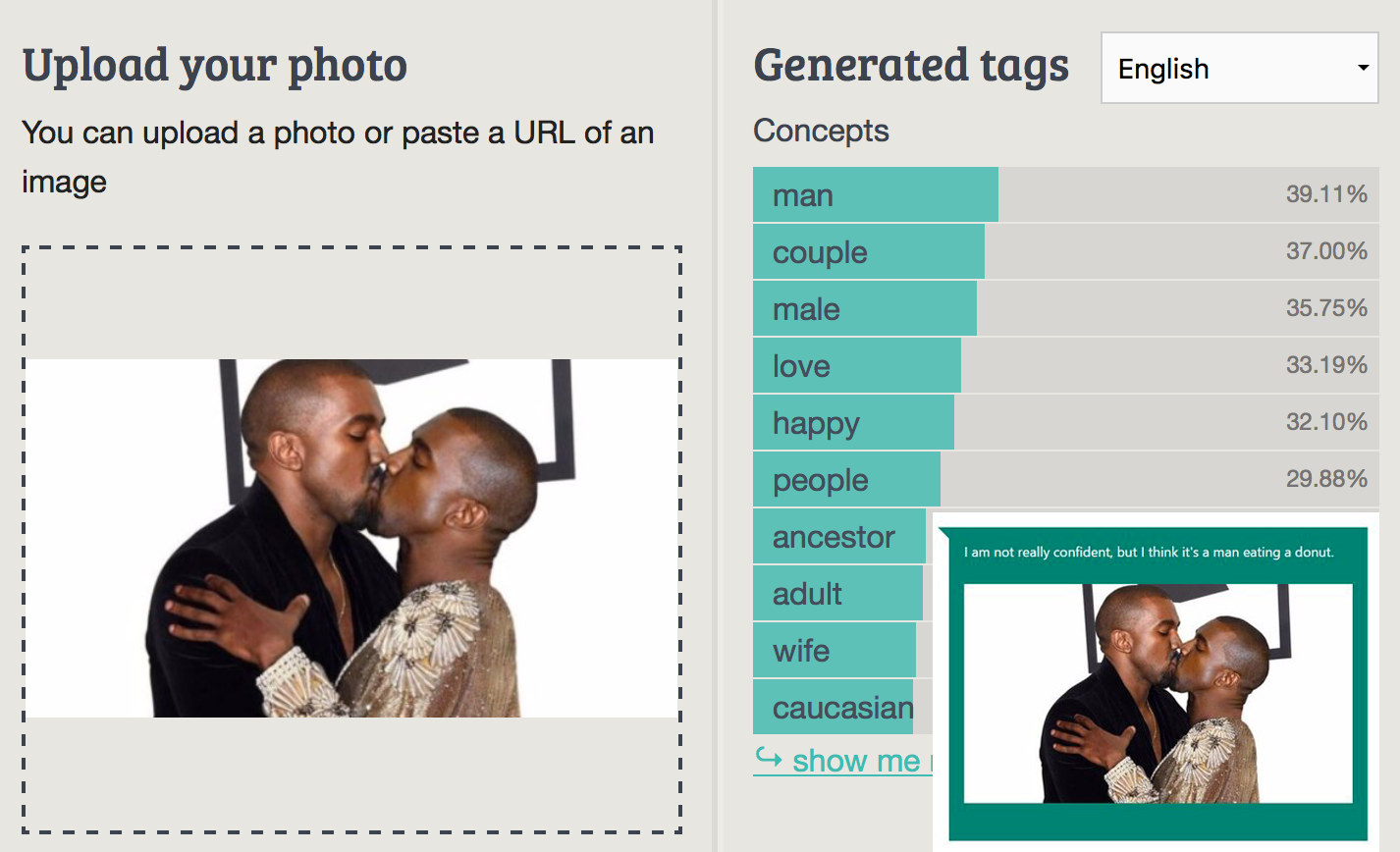

Last but not least, we don’t see donuts, but a happy male couple in love. We did get a few spicy results with lower confidence, such as wife and caucasian, but still…

Imagga Appointed One of the Eight Digital Champions of the UN’s Award for Digital Innovation With Social Impact

We are happy to announce that Imagga has been awarded a very special prize: being named one of the 8 Global Champions of the World Summit Awards (WSA). Imagga is the overall winner in the Media & News category.

The 8 WSA Global Champions are excellent examples of how digital innovation can make a true social impact and solve important issues on both a local and a global scale. WSA acknowledges pioneering innovation in the field of digital content and aims to bring visibility to projects that can create sustainable social change and impact worldwide.

During the 3-day Congress in Singapore, the selected best digital startups, young entrepreneurs, and distinguished experts from around the globe met to learn from each other. The congress speakers shared outstanding examples of, and insights into, how digital technologies drive the United Nations agenda for the Sustainable Development Goals (UN SDGs).

An on-site Grand Jury of 40 international ICT experts at the WSA Social Innovation Congress carefully selected the 8 WSA Global Champions.

It was super exciting to take part in the congress and meet innovators from all over the world. You rarely get the chance to spend quality time with such a diverse group: UN and government officials, locals and expats, and young, bright minds involved in amazing community and tech projects.

Chris was on stage on the second day to showcase Imagga and how we are changing the way people handle digital images. It was awesome to see both the jury and the audience excited about the practical applications of Imagga’s image recognition technology. It’s always a good indicator of your stage performance when people have questions, share ideas on how you can improve, or just want to meet and talk to you.

The whole WSA experience was amazing. The weather was too hot and humid for European tastes, but with an opening party by the hotel pool overlooking the city, world-class speakers during the congress, the best food Singapore can offer, and the best and friendliest event organizers, we can boldly state that WSA in Singapore was the coolest event we’ve attended.

Asia is a great market for technologies such as ours. Even such a short visit confirmed what we already knew: we need to spend more time and effort helping companies in the region manage and better monetize their digital content. We are in love with Asia and will be back soon to do more business there.

https://www.youtube.com/watch?v=S1o_Q7m3jTc

Imagga Featured Hack: Hipster Bar

Hipster Bar is where only hipsters are allowed! How do you enforce that? With a physical doorman whose job is to ruthlessly send back guys without beards or, in the case of Max Dovey’s project, with Imagga’s image recognition technology. The Hipster Bar was open to the public for the duration of the WdW Festival 2015.

Let’s get into the details of this quite unique use of Imagga’s powerful image recognition technology. To enter the bar, you stand in front of a camera that snaps a photo of you and sends it to Imagga’s servers. The technology then analyzes your look and returns how certain the system is that you are a hipster.

If you are rated more than 90% hipster, the door of the bar opens and you can join the great company of people who are hipster enough.
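The door logic described above is a simple threshold on the classifier’s confidence. Here is a minimal Python sketch. It assumes the custom classifier’s output has already been parsed into (category, confidence) pairs with confidence in percent, and the category label "hipster" is hypothetical. "Over 90%" is read as strictly greater than 90.

```python
from typing import Iterable, Tuple

HIPSTER_THRESHOLD = 90.0  # percent, as described in the post


def hipster_confidence(categories: Iterable[Tuple[str, float]], label: str = "hipster") -> float:
    """Return the classifier's confidence (0-100) for the hypothetical 'hipster' class."""
    for name, confidence in categories:
        if name == label:
            return confidence
    return 0.0  # class not returned at all: treat as not a hipster


def door_should_open(categories: Iterable[Tuple[str, float]], threshold: float = HIPSTER_THRESHOLD) -> bool:
    # "Over 90% hipster" is read here as strictly greater than the threshold.
    return hipster_confidence(categories) > threshold


print(door_should_open([("hipster", 93.4), ("not hipster", 6.6)]))  # True
print(door_should_open([("hipster", 90.0), ("not hipster", 10.0)]))  # False
```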

Hipster is quite a loose term, usually used to describe a subculture of people who try to keep up with the latest trends and remain "hip". These are men and women in their 20s and 30s who value progressive politics and independent thinking, and who often have an appetite for art, indie rock, and counterculture. Of course, being a hipster involves a certain look: thick-rimmed glasses, tight-fitting jeans, old-school sneakers, side-swept bangs, and beards (men only).

Max Dovey, an artist from Rotterdam who initiated the project, sourced thousands of images of hipsters, which our team used to build a special hipster deep learning model. The resulting classifier could easily distinguish photos of hipsters from everyone else. Here’s how it actually worked:

https://vimeo.com/139604496

Have another crazy idea? Don’t hesitate to try it out: with our custom training, the sky is the limit... if you are hip enough ;)

Imagga Wins Porsche Hackathon

Hackathons are part of Imagga’s DNA: we love to hack, and we definitely like to win. The Porsche InnovationEngine hackathon took place in Salzburg, Austria, on 8-10 June 2016, with 12 teams from all across Europe. We are happy to announce we won 1st prize, and here’s a short report on this great event.

There were three tracks for the teams: car recognition, financial optimization, and the best car for me. It’s no surprise we chose the car recognition challenge.

Every great hackathon should start with a nice dinner, and that’s what happened on Friday night. As you can imagine, we were busy drafting the hack idea, meeting mentors, and starting the actual hack. We literally used the time and the available napkins (a great startup weapon for remembering awesome ideas) to craft our winning strategy.

Unsurprisingly, the next day we dug straight into building our brilliant idea. Some mentors interrupted the process, but their insights into the car market were quite valuable. We worked hard until midnight, as we wanted to impress the jury with a fully functional app, including a custom classifier trained overnight.

On the final day of the hackathon, we presented in front of 40 managers and 3 C-level executives (including the CEO) of Porsche Holding Group. Our demo went really smoothly, and everyone was impressed with the technology and with the fact that we had put together a fully functional demo in such a short time.

Winning first prize was a great honor and also an opportunity to work in car discovery & search. And let’s not forget one of the perks of winning the Porsche hackathon: an Audi Driving Experience! Looking forward to it!

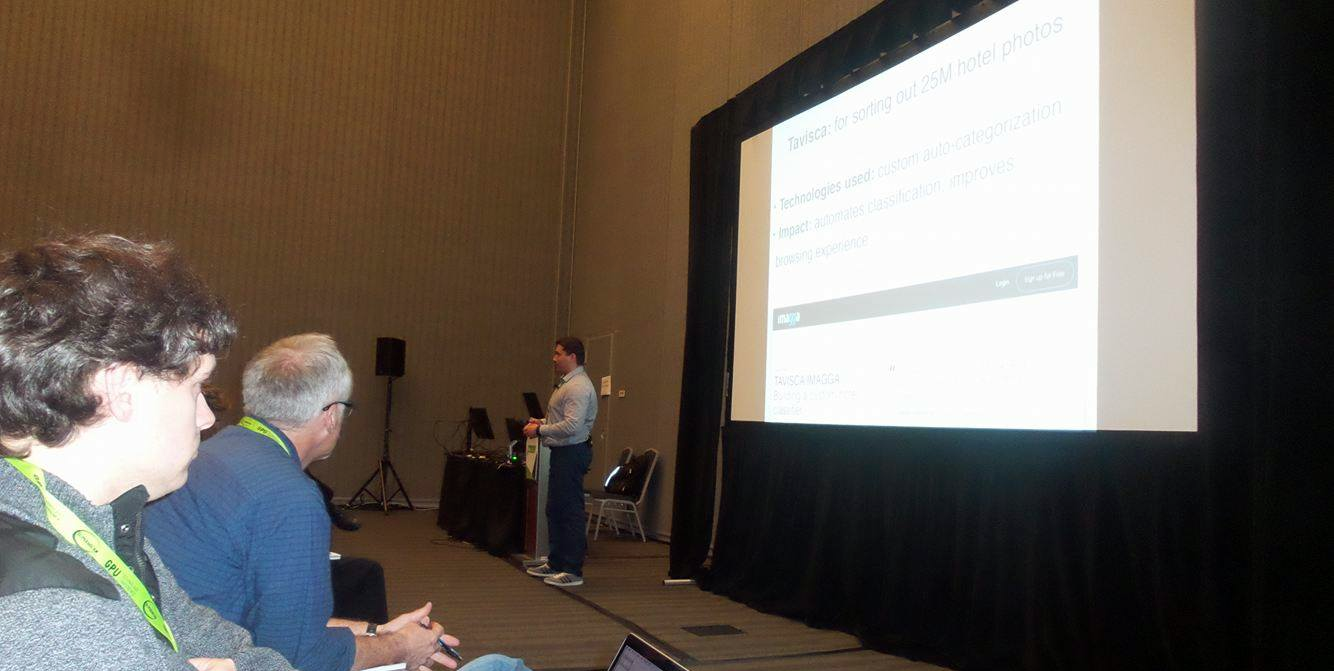

Imagga @ GPU Technology Conference

The GPU Technology Conference, organized by NVIDIA, is one of the best events for GPU-related industries. Artificial intelligence is taking every technology field by storm and becoming a frontrunner of innovation. This year, GTC16 showcased that by focusing on deep learning, virtual reality, and self-driving cars. Over 5,000 engineers, scientists, and entrepreneurs had the chance to hear from the best in the field and learn about great advances in hardware and software. NVIDIA announced some really impressive hardware, like the Tesla P100 and the NVIDIA DGX-1 (the world’s first deep-learning supercomputer, powered by 8 cards, which can speed up training times by over 12x). We are definitely going to consider it for our machine learning infrastructure in the foreseeable future.

Imagga was selected to present in the Machine Learning track on practical use cases for image classification and tagging. We hand-picked a few distinctive and fairly diverse use cases from among the hundreds of companies and thousands of developers using the Imagga platform.

To give you a glimpse of the use cases from our presentation, here are a few:

Unsplash used Imagga auto-tagging to reduce manual tagging of their amazing free stock photo offering and to enhance the search capabilities.

KIA Motors used Imagga’s auto-tagging and color extraction for quite precise user profiling.

Tavisca used custom training to automate the categorization of over 25M hotel-related photos.

Seoul National University generated a custom classifier for waste pre-sorting.

More than just showcasing, our presentation aimed to inspire people and give them an idea of how image recognition technology can be a game changer for industries that have traditionally depended on manual curation of image content.

It was amazing to see the audience engaged with the subject, following up with questions about the presented cases as well as their own potential use cases. We had the chance to meet existing and potential partners at the conference and to extend our network.

The organization was great and everything went quite smoothly. Thanks, NVIDIA, for the opportunity to be on stage and talk about the amazing product we are building with love from Bulgaria. We are looking forward to the next edition.

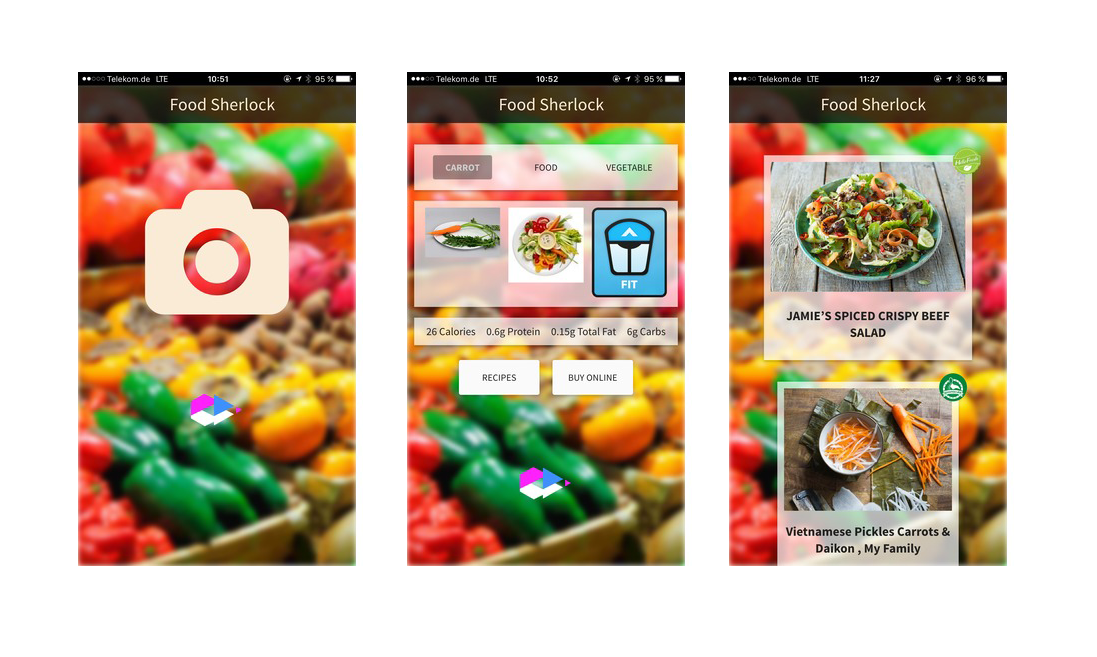

Imagga @ Food Hacks Berlin

We are super thrilled to be part of Food Hacks Berlin, organized by HackerStolz. The idea of the hackathon is to find digital ideas that can revolutionize the food industry. Food delivery has seen lots of innovation in recent years, but there’s obviously much more that can be done: better services related to what we consume daily, smart apps that take the burden out of manually tracking calorie intake, and many more.

Over 100 participants from 20 countries hacked at Rainmaking Loft, one of the best-known startup destinations in Berlin. It’s inspiring to see so many motivated hackers with experience in so many industries. One of the reasons we love hacker events in Berlin is the amazing mix of talent and the diversity of the participants. There was a group of bright Ukrainians who traveled by bus for over 12 hours, and people from Poland, Holland, the UK, and Switzerland, to name a few.

Imagga was an API partner at the event. We trained two special categorizers to help developers get the best out of our image recognition technology and build amazing apps: a meal classifier that can recognize over 300 cooked meals, and a food & veggies classifier.

Chris presented the Imagga API at a dedicated workshop, talking about how you can use it to recognize pictures of meals, fruits, and veggies.

Amazing apps were built during the weekend, from a smart fridge that can tell which food is aging and needs to be thrown away and reordered, to some quite creative ways to use food:

Neighborhood Cuisine - A mobile app that lets you get together with your neighbors to cook tasty recipes from your leftovers.

HelloFridge - the easiest way to save your ingredients from going bad by combining them to fit delicious recipes.

iTrash - the most intelligent trash can in the world

Surprise Meal - Provides surprise ideas for recipes.

Bulker - Early-morning bakery delivery that loves you

Super Quest - Food AR app for basic food education of children

Mint - A unique way to discover a new culture through music, art and food.

Vollkorntoast - New Shopping Experience

rcply - Search for recipes based on ingredients you already have at home

lekkerlekker - Your one-stop shop for finding the best recipes and directly buying all the groceries you need.

VR-ify - Efficient food testing and prototyping process with VR and brain activity scanner

TraceYourFood - See the journey of the food you consume!

KeineWaste - KeineWaste connects food businesses and volunteers in real-time, converting food waste into food donations.

WTF: What the FOOD - So you'll never run out of müsli again.

Our favorite hack is Food Sherlock, developed by Team Baker Street ;-) Food Sherlock lets you take a photo of any ingredient and gives you nutrition details, recipe suggestions, and the option to buy it online. The mobile app uses the Imagga API for image recognition, the Spoonacular API for nutrition details, and the HelloFresh API for recipe suggestions and possible food delivery.
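The hack’s flow is a chain of three services: recognize the ingredient, then look up nutrition and recipes for it. Below is a minimal Python sketch of that flow. The function and field names are hypothetical, and the real Imagga, Spoonacular, and HelloFresh calls are replaced by injected stand-in functions, since their exact requests are not described here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class FoodReport:
    ingredient: str
    nutrition: Dict[str, float]
    recipes: List[str]


def food_sherlock(
    photo: bytes,
    recognize: Callable[[bytes], str],
    nutrition_for: Callable[[str], Dict[str, float]],
    recipes_for: Callable[[str], List[str]],
) -> FoodReport:
    """Chain the three steps: recognize the ingredient, then look up nutrition and recipes."""
    ingredient = recognize(photo)  # image recognition step (Imagga in the original hack)
    return FoodReport(
        ingredient=ingredient,
        nutrition=nutrition_for(ingredient),  # nutrition lookup (Spoonacular in the hack)
        recipes=recipes_for(ingredient),  # recipe suggestions (HelloFresh in the hack)
    )


# Usage with stand-in functions instead of real API clients.
report = food_sherlock(
    b"placeholder image bytes",
    recognize=lambda _photo: "avocado",
    nutrition_for=lambda name: {"kcal": 0.0},  # placeholder value, not real data
    recipes_for=lambda name: [f"{name} toast", f"{name} salad"],
)
print(report.recipes)  # ['avocado toast', 'avocado salad']
```

Injecting the three steps as functions keeps the flow testable without network access and makes it easy to swap one provider for another.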

Here you can find the code of the hack.

The Imagga prize (a GoPro camera, t-shirts, and free API access) went to Team Baker Street for their incredible work and valuable feedback.

“Getting an idea took some time, so the first hours we decided on an idea, but it took another hour until it was clear for me what I had to do and how it should look like. Uploading the image and resizing it on the frontend was also harder than I thought, just because some of the APIs expect form data for images."

You can’t do much in the limited time of a hackathon, but the team is already making some bold plans for Food Sherlock. They think it would be quite useful to generate location-based stats for retailers, improve the image recognition with data specific to different geographic locations, experiment with new ways to visualize the results (maybe adding something like a search heat map overlay), and offer text-to-speech and speech recognition so that refugees can also use the app.

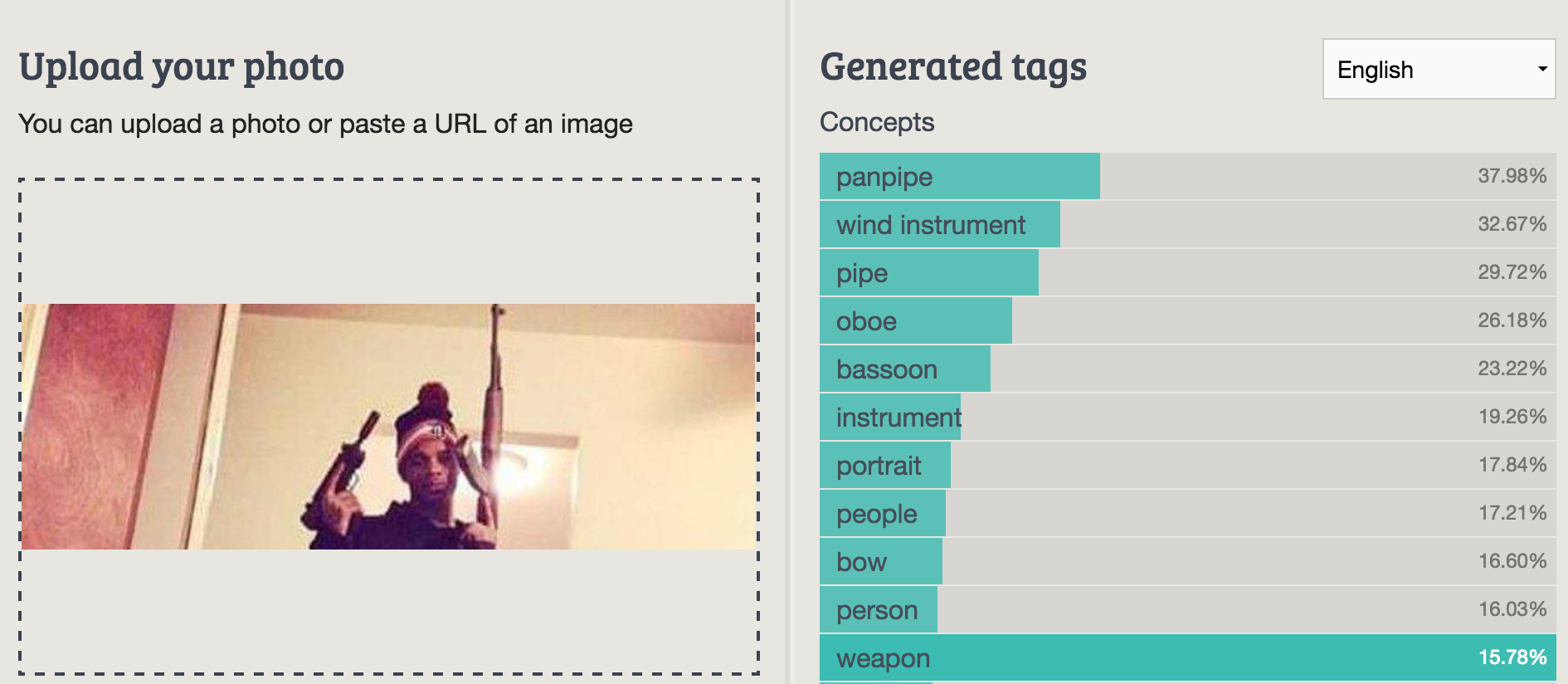

Imagga Featured Hack: Automatically Finding Weapons in Social Media Images

Weapons are a controversial topic, especially in conflict zones. Many photos of people holding weapons are posted on social media every day. Analyzing the concentration of such photos and the motives for posting them can hint at a trend or at potential problems.

In a recent post, Justin Seitz showcases a simple hack for discovering photos of weapons on social media using Imagga’s image recognition API. Not all photos of people with weapons are detected by our general tagging API, and there’s a simple explanation for that: the API hasn’t been specifically trained for this task. Still, it’s quite amazing that we detect various types of weapons in different contexts.
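At its core, a hack like this is a filter over the tags returned for each image. Below is a minimal Python sketch. It assumes the tags have already been fetched as (label, confidence) pairs with confidence in percent. The keyword list and the threshold are hypothetical choices, not taken from Justin’s post, and a general-purpose tagger will still miss some images.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical keyword list; a real deployment would tune this against actual tag output.
WEAPON_TAGS = {"weapon", "gun", "rifle", "pistol", "revolver", "firearm", "handgun", "assault rifle"}


def weapon_hits(tags: Iterable[Tuple[str, float]], min_confidence: float = 20.0) -> List[Tuple[str, float]]:
    """Return the weapon-related tags whose confidence (0-100) passes the threshold."""
    return [(label, conf) for label, conf in tags
            if label.lower() in WEAPON_TAGS and conf >= min_confidence]


def flag_images(tagged: Dict[str, List[Tuple[str, float]]],
                min_confidence: float = 20.0) -> Dict[str, List[Tuple[str, float]]]:
    """Keep only the images with at least one weapon-related tag, with the matching tags."""
    flagged: Dict[str, List[Tuple[str, float]]] = {}
    for image_id, tags in tagged.items():
        hits = weapon_hits(tags, min_confidence)
        if hits:
            flagged[image_id] = hits
    return flagged


tagged = {
    "post-1.jpg": [("man", 60.0), ("rifle", 41.0), ("military", 35.0)],
    "post-2.jpg": [("beach", 80.0), ("gun", 8.0)],
}
print(flag_images(tagged))  # {'post-1.jpg': [('rifle', 41.0)]}
```

Counting flagged images per region or per time window would then give the kind of concentration signal discussed below.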

Applications of this are numerous: detecting concentrations of weapon-related photos and possible militarization in a certain area, or unauthorized or hazardous use of weapons, including by children, to name a few.

Justin also managed to improve the results by cropping some of the images in advance so that they focus on the potential weapon. Upcoming updates of our API will handle this even better: we are releasing positional tagging of objects soon, which, besides giving the actual position, will also improve object-level recognition. Stay tuned!

In addition to the very interesting use case, Justin’s article is a great practical guide to using Imagga’s auto-tagging API, so make sure to check it out.